Optimizing video content for social search and AI discovery is no longer optional. It is the single biggest visibility opportunity most brands are ignoring in 2026.

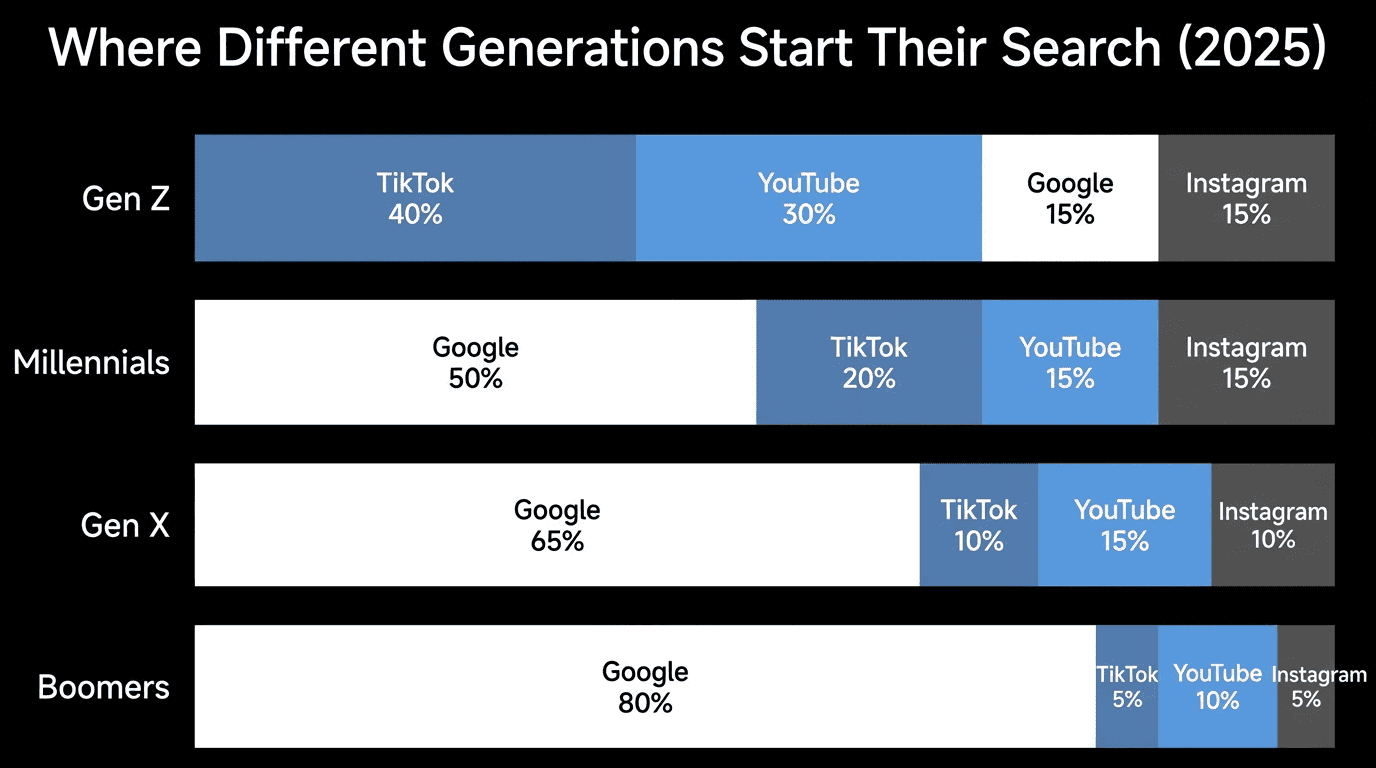

Here is the reality. 41% of Gen Z now search social platforms before they ever open Google (Sprout Social, 2025). At the same time, 810 million people use ChatGPT every day (Superlines, 2026). Your audience is not just searching in one place anymore. They are searching everywhere.

Yet most brands still optimize video for a single platform. They post to YouTube and hope for the best. They upload TikToks without thinking about searchability. And they completely ignore the AI systems that are pulling video content into generated answers millions of times per day.

This guide gives you a unified framework for making every video discoverable across social search engines, traditional search, and AI platforms like ChatGPT, Perplexity, and Google AI Overviews. All at once.

Why Video Is the New Centre of Search Discovery

Before you can optimize video for multiple channels, you need to understand where discovery actually happens now. The landscape has fractured. And the brands winning are the ones treating video as a search asset, not just a content format.

Social Platforms Are Now Search Engines

Google is no longer the default starting point for every search. That shift is not a prediction. It is happening right now.

51% of Gen Z prefer TikTok over Google as their primary search engine (Adobe, 2025). Google itself has acknowledged that nearly 40% of younger users prefer searching on TikTok or Instagram (Google, 2024). And 52% of social media users prefer social search over AI chatbots when looking for user-generated content like reviews, recommendations, and tutorials (Sprout Social, 2025).

This is not a niche behaviour. It is a fundamental change in how people find information.

The types of searches happening on social platforms break down into three categories:

"How do I" searches: tutorials, walkthroughs, and demonstrations where video is the preferred format

"Where should I" searches: local recommendations, restaurants, travel destinations, and experiences

"What is the best" searches: product comparisons, reviews, and buying decisions

Each of these search types favours video over text. And each one represents traffic that traditional search engine optimization alone will not capture.

AI Systems Are Citing Video Content at Scale

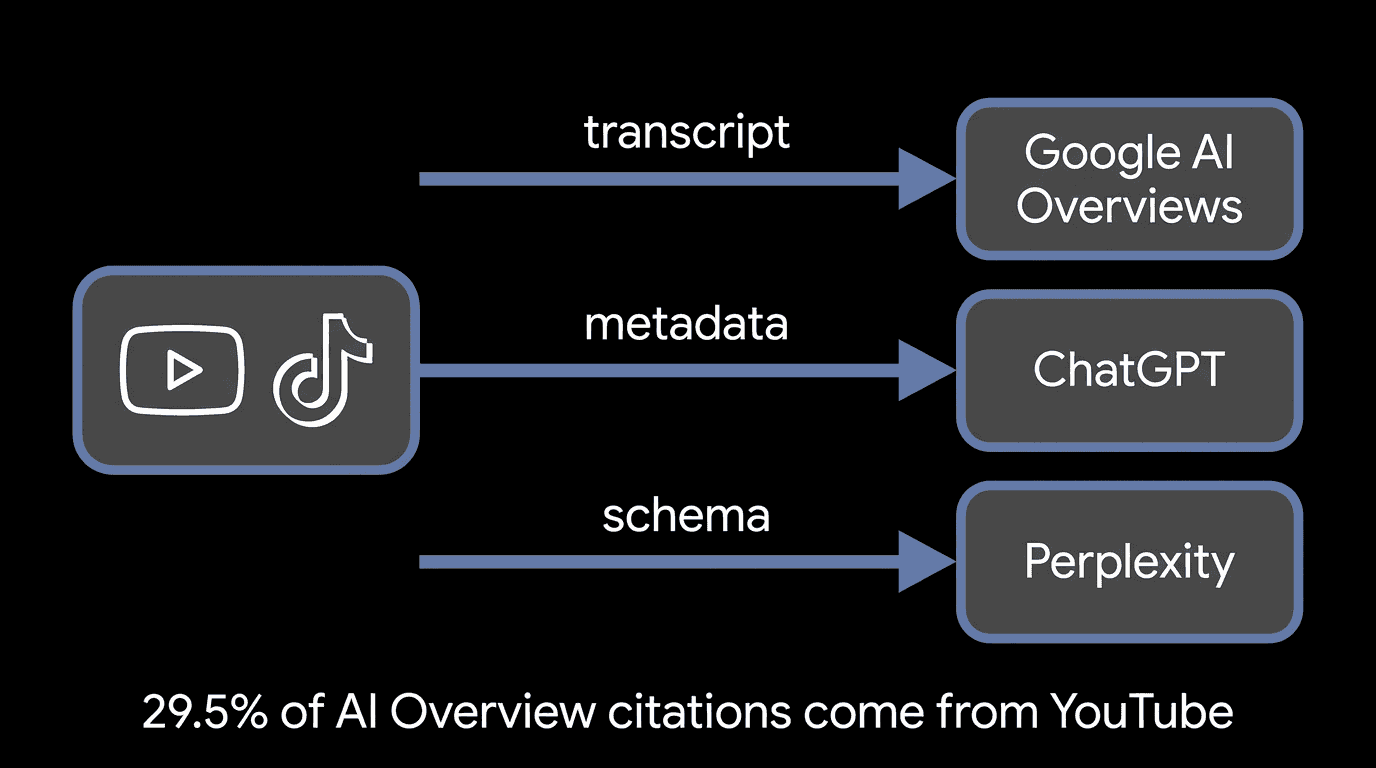

Here is the part most brands miss entirely. AI systems are not just answering questions with text. They are pulling from video content, and YouTube is leading the way.

YouTube is the single most cited domain in Google AI Overviews, accounting for roughly 29.5% of all citations (Superprompt, 2025). That means nearly one in three AI Overview citations points to YouTube video content. Social platforms collectively account for close to 10% of all AI citations (BrightEdge, 2025).

The data gets more interesting when you factor in content format. Multi-modal content (pages combining text, images, video, and structured data) shows 156% higher selection rates for AI Overviews compared to text-only content (AI Mode Boost, 2025). Adding video to your content does not just help social search. It makes you more likely to be cited by AI.

And brands that get cited earn measurably more traffic. Cited brands earn 35% more organic clicks even as overall organic CTR drops 61% for queries with AI Overviews (Seer Interactive, 2025). Getting cited is no longer a bonus. It is the difference between visibility and obscurity.

The Revenue Case for Dual Optimization

Discovery is only half the story. The business case for AI search optimization is built on conversion rates that outpace traditional channels.

AI referral traffic converts at 14.2% compared to Google's 2.8% (Exposure Ninja, 2025). That is a 5x conversion advantage. When someone finds your brand through an AI-generated answer, they arrive with higher intent and more trust because the AI has already validated your relevance.

I have seen this first-hand. When we optimized Maple Terroir's content for AI discovery, they began showing up in ChatGPT recommendations, Gemini, and Perplexity. I tracked a $300+ order directly from ChatGPT discovery. Proving that real sales came from AI search was a first for us, and it changed how we approach every client engagement.

The generative engine optimization market reflects this shift. It was valued at $848 million in 2025 and is projected to reach $33.7 billion by 2034 at a 50.5% compound annual growth rate (Coherent Market Insights, 2025).
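The projection is internally consistent. A quick check, assuming nine compounding years between the 2025 valuation and the 2034 projection, recovers the stated growth rate from the two endpoints. The helper names are illustrative, not from any cited report:

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and period."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Market figures from the paragraph above, in billions of USD (2025 -> 2034 = 9 years).
START_2025, END_2034, YEARS = 0.848, 33.7, 9

print(f"Implied CAGR: {implied_cagr(START_2025, END_2034, YEARS):.1%}")
print(f"Projected 2034 value at 50.5%: ${project(START_2025, 0.505, YEARS):.1f}B")
```

Both printed figures land within a rounding step of the cited ones, so the growth rate and the endpoint agree.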

| Channel | Avg. Conversion Rate | Discovery Format | Effort to Optimize |
|---|---|---|---|
| Google Organic Search | 2.8% | Text links | High (established competition) |
| Social Search (TikTok, YouTube) | 3-5% | Video results | Medium (growing but less saturated) |
| AI Discovery (ChatGPT, Perplexity, AI Overviews) | 14.2% | Citations in AI answers | Medium (new, few brands optimizing) |

The brands that will win are not the ones choosing between these channels. They are the ones optimizing for all three simultaneously.

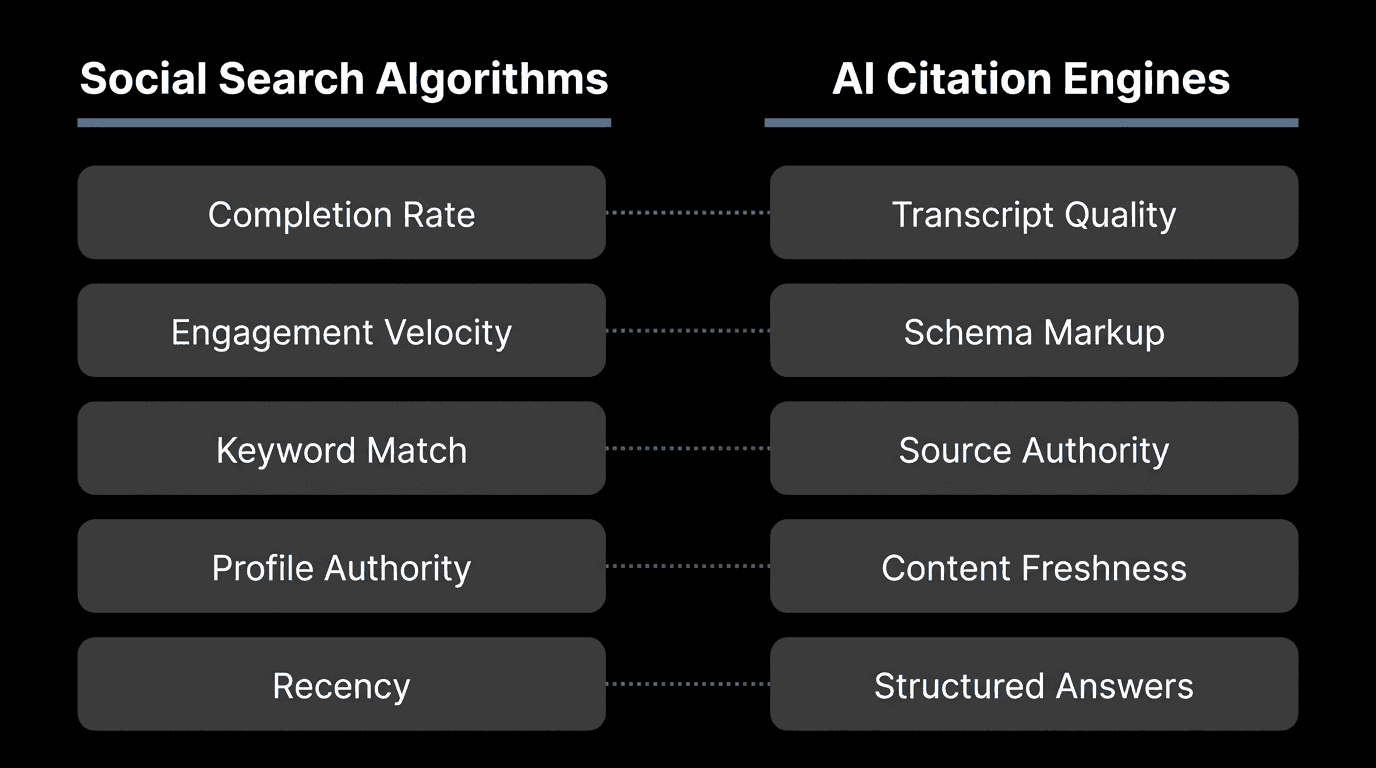

How Social Search and AI Algorithms Evaluate Video

Now that you understand where discovery happens, here is how each system decides which video content to surface. Understanding these mechanics is the foundation of every optimization decision you will make.

TikTok's Search Algorithm Signals

TikTok's search algorithm treats every video as a potential search result. Unlike YouTube, there is no distinction between "entertainment" and "search" content. Every video is indexed and ranked.

The ranking signals TikTok prioritizes for search discovery:

Caption keywords: TikTok's algorithm reads your caption text and matches it against search queries. Specific, descriptive captions outperform vague ones.

On-screen text: TikTok's AI reads text that appears in your video. This is a ranking signal most creators ignore. Keywords displayed on screen carry weight.

Spoken words: TikTok transcribes audio and uses it for search matching. Saying your target keyword in the first 5 seconds matters.

Hashtags: Function as topic categorization more than reach amplifiers. Use 3-5 specific hashtags that match search intent.

Completion rate: Videos with 70%+ completion rates gain 30% more impressions (Solveig Multimedia, 2025). Shorter videos (15-30 seconds) achieve this more easily.

Engagement velocity: Comments, shares, and saves in the first hour signal relevance to TikTok's algorithm.

The key insight: TikTok reads your video on three layers (caption text, on-screen text, and spoken audio) and matches all three against user searches. Optimizing only one layer leaves discovery on the table.

YouTube's Discovery and Search Systems

YouTube operates three separate discovery systems, and each evaluates video differently.

YouTube Search ranks based on metadata relevance (title, description, tags), watch time, and audience retention. A video titled precisely for the query with strong completion signals will outperform a loosely related video with higher total views.

Home and Suggested rely on personalization, click-through rate, and session watch time. YouTube's goal here is maximizing how long someone stays on the platform, not just on your video.

YouTube Shorts use a completely separate algorithm that prioritizes completion rate above everything else. A 20-second Short with 90% completion outperforms a 60-second Short with 70% completion (Dataslayer, 2025). YouTube optimizes Shorts for "satisfaction per swipe," not total minutes watched.
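A back-of-envelope comparison makes the point concrete. This sketch treats completion rate as the average fraction of the Short each view watches, which is a simplifying assumption, not YouTube's published definition of the metric:

```python
def avg_seconds_watched(length_s: float, completion_rate: float) -> float:
    """Approximate seconds watched per view as length x completion rate."""
    return length_s * completion_rate


# The two Shorts from the example above: (length in seconds, completion rate).
shorts = {"20-second Short": (20, 0.90), "60-second Short": (60, 0.70)}

for name, (length_s, completion) in shorts.items():
    watched = avg_seconds_watched(length_s, completion)
    print(f"{name}: {completion:.0%} completion, about {watched:.0f}s watched per view")
```

The 60-second Short delivers more raw watch time per view (about 42 seconds against 18), yet the 20-second Short wins, which is exactly what optimizing for satisfaction per swipe rather than total minutes implies.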

With over 2.7 billion monthly active users watching more than 1 billion hours of video daily (Teleprompter.com, 2025), YouTube is both a search engine and a content platform. The brands that treat it as only one of those will underperform.

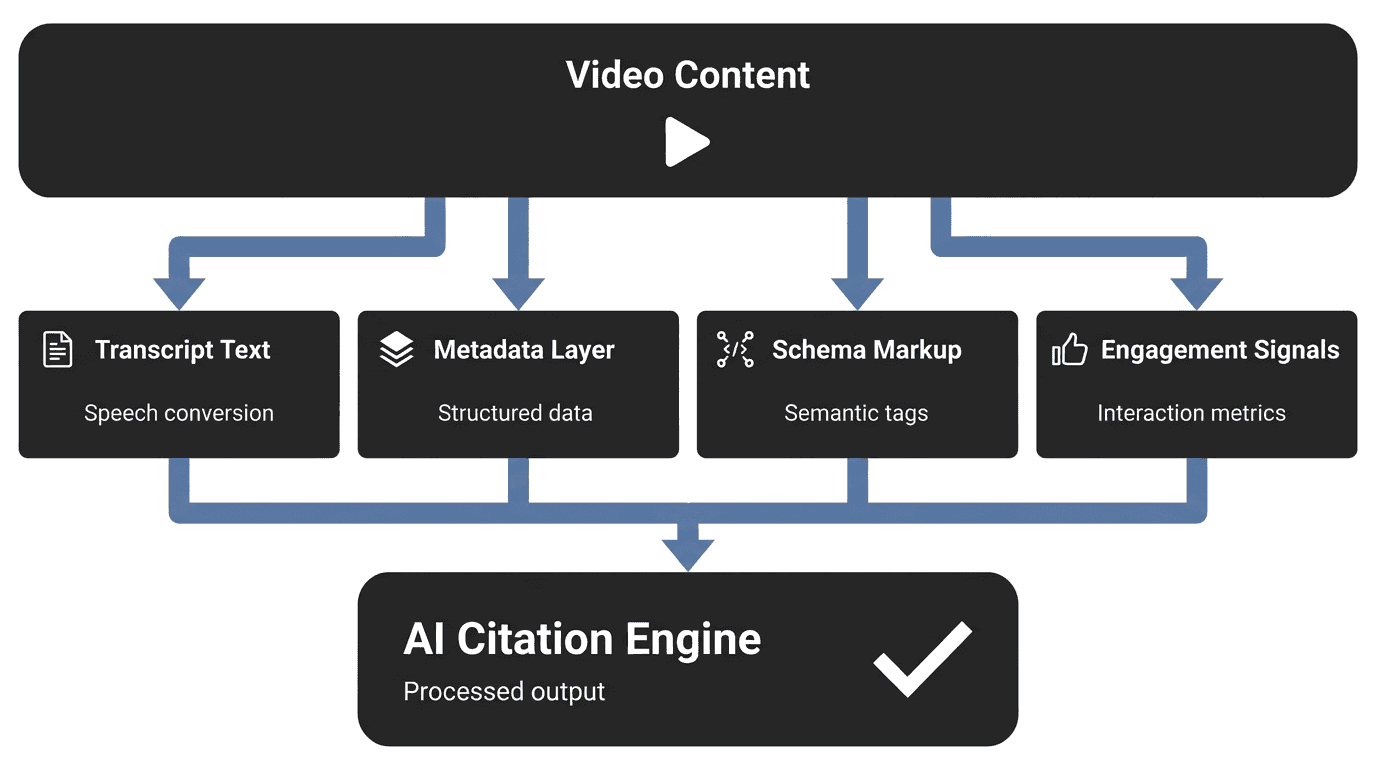

How AI Systems Read and Cite Video Content

This is the critical piece most optimization guides skip entirely. AI systems like ChatGPT, Perplexity, and Google AI Overviews do not "watch" your videos. They read them.

AI processes video content through four data layers:

Transcripts and captions: The full text of what is said in the video. This is the primary content layer AI systems extract from.

Metadata: Title, description, tags, and channel information. AI uses this to determine relevance and context.

Schema markup: Structured data (especially VideoObject schema) that tells AI systems exactly what the video covers, its duration, thumbnail, and key moments.

Engagement signals: View counts, like ratios, and comment sentiment serve as quality indicators.
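To make the schema layer concrete, here is a minimal sketch that builds VideoObject JSON-LD in Python. The property names (name, description, thumbnailUrl, uploadDate, duration, embedUrl, and hasPart with Clip entries for key moments) come from schema.org; the helper names, the example.com URLs, and the video details are hypothetical placeholders. Validate the output with Google's Rich Results Test before relying on it:

```python
import json


def iso8601_duration(total_seconds: int) -> str:
    """Convert seconds to an ISO 8601 duration such as PT12M30S."""
    hours, rem = divmod(int(total_seconds), 3600)
    minutes, seconds = divmod(rem, 60)
    return f"PT{hours}H{minutes}M{seconds}S" if hours else f"PT{minutes}M{seconds}S"


def video_object_jsonld(name, description, thumbnail_url, upload_date,
                        duration_s, embed_url, clips=()):
    """Return schema.org VideoObject markup as a JSON-LD string.

    clips: (label, start_s, end_s, url) tuples, emitted as Clip entries
    in hasPart so key moments are machine-readable.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": [thumbnail_url],
        "uploadDate": upload_date,  # ISO 8601 date-time with timezone
        "duration": iso8601_duration(duration_s),
        "embedUrl": embed_url,
    }
    if clips:
        data["hasPart"] = [
            {"@type": "Clip", "name": label, "startOffset": start,
             "endOffset": end, "url": url}
            for label, start, end, url in clips
        ]
    return json.dumps(data, indent=2)


# Hypothetical example values; swap in your own video details.
markup = video_object_jsonld(
    name="Video SEO for AI Discovery",
    description="How to make one video discoverable on YouTube, TikTok, and AI answers.",
    thumbnail_url="https://www.example.com/thumbs/video-seo.jpg",
    upload_date="2026-01-15T08:00:00+00:00",
    duration_s=750,
    embed_url="https://www.youtube.com/embed/VIDEO_ID",
    clips=[("Keyword research", 95, 310, "https://www.example.com/video-seo?t=95")],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script tag belongs on the page that embeds the video, alongside the transcript.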

Search Engine Land reported that AI systems can now deconstruct video into parallel visual, auditory, and textual streams through discrete tokenization (Search Engine Land, 2025). Even so, transcripts, metadata, and schema remain the layers you directly control.

The Unified Video Optimization Framework

The same foundational elements power discovery across social search, YouTube, and AI citation engines. That is not a theory. It is a pattern I see every time we implement generative engine optimization for clients.

Do not get caught up in the noise. Focus on the signal. The signal is still strong SEO fundamentals, layered with new AI-driven practices. The shift is in priorities, not in abandoning the basics.

This framework has four layers. Each one builds on the previous.

Layer 1: Keyword Research for Video Discovery

Video keyword research differs from traditional keyword research because the same topic generates different search queries on each platform. Someone searching on TikTok types differently than someone on YouTube, and both differ from how people phrase questions to ChatGPT.

Here is a practical cross-platform keyword research process:

Start with your core topic. Identify the problem your video solves or the question it answers.

Check TikTok search suggestions. Type your topic into TikTok's search bar and note the autocomplete suggestions. These reflect real user searches on the platform.

Check YouTube autocomplete. Do the same on YouTube. Note how suggestions differ from TikTok (typically longer, more specific queries).

Check Google's "People Also Ask." These questions reveal the broader informational landscape around your topic.

Run ChatGPT test prompts. Ask ChatGPT questions related to your topic and note which brands and videos it references. This reveals what AI currently considers authoritative.

Cross-reference with traditional tools. Use Ahrefs, SEMrush, or Moz to validate search volume and competition for your target terms.

| Tool | Platform Focus | Best For | Cost |
|---|---|---|---|
| TikTok Creative Centre | TikTok | Trending topics, hashtag volume | Free |
| YouTube Studio / Autocomplete | YouTube | Search volume, competition | Free |
| Google Trends | Cross-platform | Trend comparison, seasonality | Free |
| Ahrefs / SEMrush | Google + YouTube | Volume, difficulty, SERP analysis | Paid |
| AnswerThePublic | All platforms | Question-based queries | Free / Paid |

The goal is to find the overlap. When a keyword appears across multiple platforms, it signals broad demand that you can capture with one video distributed across all channels.
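Finding that overlap is a counting exercise once each platform's suggestions are copied into lists. A minimal sketch, assuming exact-phrase matching after lowercasing and whitespace cleanup (near-variants such as plurals are not merged); `keyword_overlap` is a hypothetical helper and the suggestion lists are invented for illustration:

```python
from collections import defaultdict


def keyword_overlap(suggestions_by_platform, min_platforms=2):
    """Return {keyword: [platforms]} for keywords seen on at least min_platforms."""
    platforms_for = defaultdict(set)
    for platform, suggestions in suggestions_by_platform.items():
        for phrase in suggestions:
            key = " ".join(phrase.lower().split())  # normalize case and spacing
            platforms_for[key].add(platform)
    overlap = {k: sorted(p) for k, p in platforms_for.items() if len(p) >= min_platforms}
    # Most widely shared keywords first, then alphabetical for stable output.
    return dict(sorted(overlap.items(), key=lambda kv: (-len(kv[1]), kv[0])))


suggestions = {
    "tiktok": ["video seo tips", "how to rank videos", "video seo for tiktok"],
    "youtube": ["Video SEO tips", "how to rank videos", "video seo for youtube"],
    "google_paa": ["video seo tips", "what is video seo"],
}

for keyword, platforms in keyword_overlap(suggestions).items():
    print(f"{keyword}: {', '.join(platforms)}")
```

Exact matching keeps the sketch simple and predictable; a fuzzier match would surface more overlap but also more false positives.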

Layer 2: Metadata That Serves Humans and Machines

Metadata is the bridge between your video content and every discovery system. Get this right and everything else compounds. Get it wrong and even the best video stays buried.

Here is why metadata structure matters more than most people realize. 44.2% of all LLM citations come from the first 30% of text (Growth Memo, 2026). Content structured into 120-180 word sections earns 70% more AI citations than unstructured content (SE Ranking, 2025).

That suggests the first 100-200 characters of your video description deserve the most care: they sit at the top of the text AI systems are most likely to extract and cite.

Cross-platform metadata checklist:

| Element | YouTube | TikTok | Website Embed |
|---|---|---|---|
| Title | Primary keyword in first 5 words. Aim for about 60 chars (100 max) so it is not truncated. | Primary keyword + hook. 100 chars max. | H1 tag with primary keyword. |
| Description | Keyword in first 100 chars. Timestamps. Links. 300+ words. | Keyword + context. 2-3 sentences. 150 chars optimal. | Surrounding paragraph with keyword. 200+ words. |
| Tags/Hashtags | 5-10 tags mixing broad and specific. | 3-5 specific hashtags. No generic tags. | Meta tags on page. |
| Captions | Upload SRT file with accurate transcript. | Auto-captions (verify accuracy). | Full transcript published below embed. |
| Thumbnail | Custom. Keyword context in imagery. | First frame or custom cover. | VideoObject schema thumbnailUrl. |

The principle: front-load your keywords and key information in every metadata field. Both social algorithms and AI systems weight the beginning of text more heavily than the end.
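The front-loading rules in the checklist can be spot-checked before you publish. A minimal sketch: `check_front_loading` is a hypothetical helper, its thresholds (keyword within the first five title words, within the first 100 description characters, titles around 60 characters) are taken from the checklist, and matching is plain case-insensitive substring search, so it will not catch synonyms or reordered phrases:

```python
def check_front_loading(keyword: str, title: str, description: str,
                        title_words: int = 5, description_chars: int = 100,
                        title_target: int = 60) -> list[str]:
    """Return a list of front-loading problems (an empty list means it passes)."""
    issues = []
    kw = keyword.lower()
    opening_words = " ".join(title.lower().split()[:title_words])
    if kw not in opening_words:
        issues.append(f"keyword not in first {title_words} title words")
    if len(title) > title_target:
        issues.append(f"title is {len(title)} chars (target {title_target})")
    if kw not in description.lower()[:description_chars]:
        issues.append(f"keyword not in first {description_chars} description chars")
    return issues


good = check_front_loading(
    "video seo",
    "Video SEO for AI Discovery: The 2026 Framework That Works",
    "Video SEO for AI discovery: how to make one video findable on YouTube, TikTok, and in AI answers.",
)
weak = check_front_loading(
    "video seo",
    "Here Is Everything You Need to Know About Video SEO",
    "In this video we cover a lot of ground.",
)
print(good)
print(weak)
```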

Layer 3: Engagement Signals That Compound Discovery

Engagement is not a vanity metric. It is a ranking signal on every platform and an authority signal for AI systems. The challenge is that each platform measures engagement differently.

The opening seconds decide whether anyone stays. On TikTok, viewers swipe past in under 2 seconds if the hook fails. On YouTube, the first 30 seconds determine whether someone keeps watching or bounces. For AI systems, the engagement those openings earn (view counts, like ratios) later serves as a quality indicator.

Practical engagement tactics that work across platforms:

Open with a specific promise or surprising fact. "This one change tripled our video views" outperforms "Tips for better video."

Use pattern interrupts. Change camera angles, add text overlays, or shift pacing every 8-10 seconds to maintain attention.

Ask for specific engagement. "Tell me which method you have tried" generates more comments than "Like and subscribe."

Design for shareability. Videos that teach something useful in under 60 seconds tend to get shared more than long-form content, and shares are generally among the most heavily weighted engagement signals on social platforms.

Build playlists and series. YouTube rewards session watch time. A viewer who watches three of your videos in a row signals higher quality than one who watches a single video.

The compounding effect matters here. Higher engagement on social platforms leads to more views, which builds domain and brand authority, which makes AI systems more likely to cite your content. Social engagement feeds AI discovery indirectly through authority signals.

Layer 4: Cross-Platform Distribution Strategy

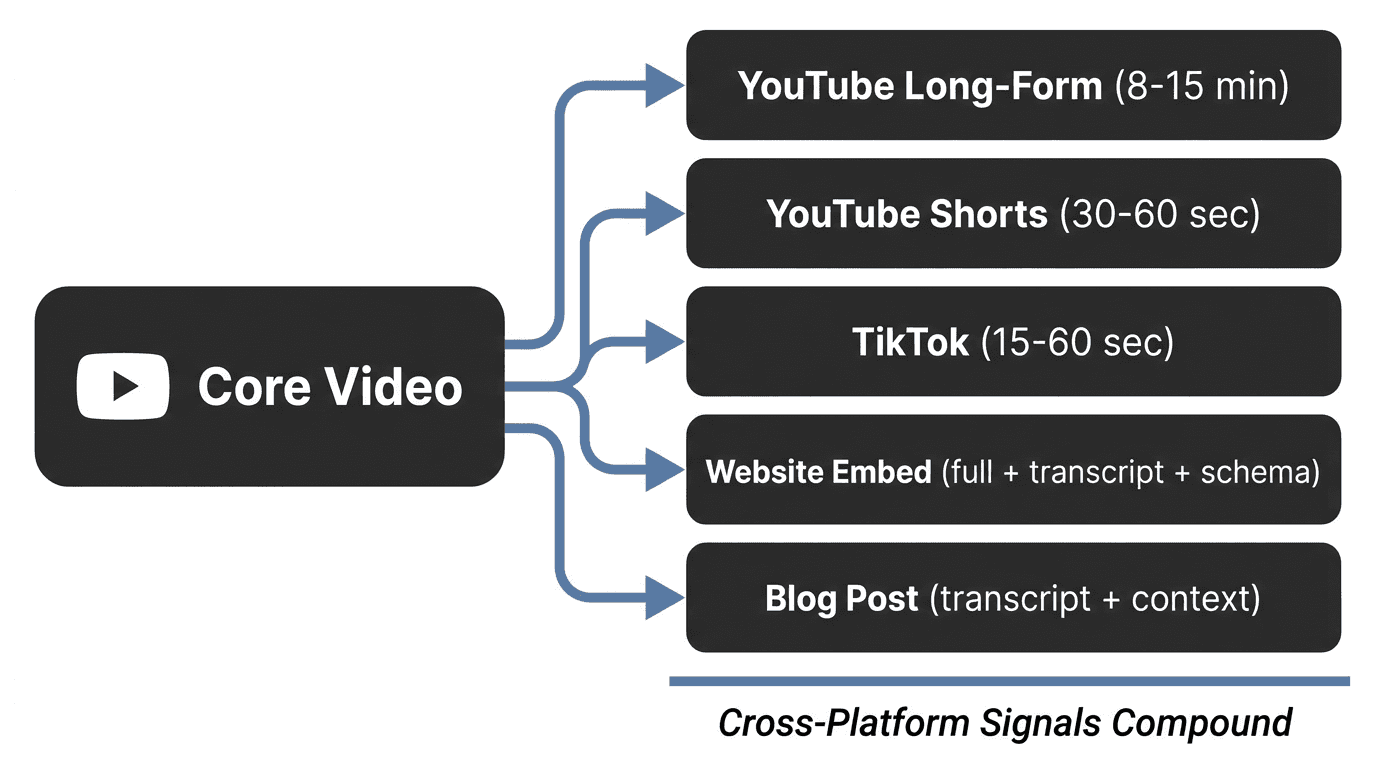

This is the layer where most brands waste the most effort. They create separate videos for each platform instead of creating one strong piece of video content and adapting it for multiple discovery channels.

Here is the distribution workflow I recommend:

Step 1: Create the long-form version first. Film a complete, in-depth video (8-15 minutes) covering your topic thoroughly. This becomes your YouTube anchor and your website embed.

Step 2: Extract short-form clips. Pull 3-5 moments from the long-form video that work as standalone short clips (15-60 seconds each). These become TikToks and YouTube Shorts.

Step 3: Publish the long-form on YouTube with full metadata optimization (keyword-rich title, front-loaded description, timestamps, tags, custom thumbnail).

Step 4: Embed on your website with the full transcript published as text on the same page, VideoObject schema markup, and 200+ words of surrounding contextual copy.

Step 5: Distribute short-form clips across TikTok and YouTube Shorts with platform-specific captions, hashtags, and hooks.

Step 6: Create a supporting blog post that expands on the video topic with additional data, examples, and internal links. Embed the video and link to it from the post.

Each touchpoint creates another signal. YouTube builds search authority. TikTok captures social search traffic. The website embed with schema creates the machine-readable layer AI systems need. The blog post provides textual context that strengthens citation probability. And brand mentions across all these platforms compound your entity recognition.

One video. Five discovery channels. That is the efficiency advantage of a unified approach.

Platform-Specific Implementation Playbooks

With the framework in place, here is exactly how to implement it on the platforms that matter most. These are tactical, step-by-step playbooks you can apply to your next video.

YouTube Optimization for Search and AI Citations

YouTube is the single highest-impact platform for video discovery because it feeds both Google search and AI citation engines simultaneously. Remember, YouTube accounts for roughly 29.5% of all AI Overview citations (Superprompt, 2025).

YouTube optimization checklist:

Title formula: Primary keyword in first 5 words + benefit or hook. Example: "Video SEO for AI Discovery: The 2026 Framework That Works"

Description structure:

First 100 characters: Primary keyword + one-sentence summary

Timestamps for all major sections (these map to schema clips)

300+ words of detailed description with secondary keywords

Links to your website, related videos, and social profiles

Tags: 5-10 tags. Mix your primary keyword, variations, and broader topic tags.

Chapters: 4-8 chapters with descriptive labels. These help both viewers and AI systems navigate your content.

Cards and end screens: Link to related videos to extend session time.

Captions: Upload a corrected SRT file. Do not rely on auto-generated captions for accuracy.

Community tab: Post about your video to drive initial engagement signals.
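Timestamps and chapters come from the same data, so it can help to generate them together. A minimal sketch: `chapter_lines` and `format_timestamp` are hypothetical helpers, and the rules enforced (first chapter at 0:00, at least three chapters in ascending order, each at least 10 seconds long) reflect YouTube Help's chapter guidance at the time of writing, so confirm them there before relying on the check:

```python
def format_timestamp(seconds: int) -> str:
    """Format seconds as YouTube-style M:SS, or H:MM:SS past one hour."""
    hours, rem = divmod(int(seconds), 3600)
    minutes, secs = divmod(rem, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def chapter_lines(chapters, video_length_s):
    """Turn (start_seconds, label) pairs into description timestamp lines.

    Raises ValueError on fewer than three chapters, a first chapter that
    does not start at 0:00, or any chapter shorter than 10 seconds (which
    also catches starts that are out of order).
    """
    if len(chapters) < 3:
        raise ValueError("YouTube needs at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("first chapter must start at 0:00")
    ends = [start for start, _ in chapters[1:]] + [video_length_s]
    for (start, label), end in zip(chapters, ends):
        if end - start < 10:
            raise ValueError(f"chapter '{label}' is shorter than 10 seconds")
    return [f"{format_timestamp(start)} {label}" for start, label in chapters]


for line in chapter_lines(
    [(0, "Why video search matters"), (95, "Keyword research"),
     (310, "Metadata checklist"), (620, "Measuring results")],
    video_length_s=780,
):
    print(line)
```

The same start times can be reused for the Clip (key moment) entries in VideoObject schema on your website embed.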

For Perplexity specifically, note that it heavily references YouTube video content in its answers. A well-structured YouTube description with clear, factual statements is often pulled directly into its responses.

TikTok Optimization for Social Search

TikTok's search algorithm reads three content layers: caption text, on-screen text, and spoken audio. Optimizing all three layers gives you the strongest search signal.

TikTok search optimization checklist:

Caption: Lead with your target keyword naturally.

On-screen text: Display your primary keyword as text overlay within the first 3 seconds. TikTok's AI indexes on-screen text for search.

Spoken keyword: Say your target keyword or phrase within the first 5 seconds. TikTok transcribes audio for search matching.

Hook structure: First 2 seconds must stop the scroll. Use a question, surprising stat, or direct address ("You are making this mistake with your videos").

Completion rate: Keep videos 15-30 seconds for highest completion rates. Shorter videos achieve 70%+ completion more consistently.

Profile optimization: Include your niche keywords in your bio and username. TikTok's search weighs profile context when ranking results.

Posting timing: Publish when your audience is most active (check TikTok Analytics). Early engagement velocity affects search ranking.

Website Video Embeds for Maximum AI Extractability

This is the step most brands skip entirely, and it is the one that creates the most AI citation opportunity.

When you embed a video on your website with the right supporting elements, you create a page that AI systems can fully read, understand, and cite. Without this step, your video exists only within platform ecosystems. With it, you enter the open web where AI crawlers operate.

Website embed optimization checklist:

Embed the video on a dedicated page or blog post with a keyword-optimized H1.

Publish the full transcript below the embed as readable text. Format it with headers that match video sections.

Write 200+ words of contextual copy around the embed. This gives AI systems additional text to extract and cite.

Include an FAQ section below the video addressing related questions. FAQ sections are high-citation targets for AI.

Internal link to the page from related content on your site using descriptive anchor text.

Request indexing for the page through the URL Inspection tool in Google Search Console immediately after publishing.
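If you already have a corrected SRT caption file, the on-page transcript can be generated from it rather than retyped. A minimal sketch, assuming standard SRT cues (an index line, an HH:MM:SS,mmm timing line, then caption text) and a hypothetical list of (start_seconds, heading) chapters; it groups caption text under the heading whose chapter each cue starts in:

```python
import bisect
import re

# Start time of an SRT timing line such as "00:01:40,000 --> 00:01:44,000".
CUE_START = re.compile(r"(\d{2}):(\d{2}):(\d{2}),(\d{3})\s*-->")


def parse_srt(srt_text):
    """Yield (start_seconds, caption_text) for each cue in an SRT document."""
    normalized = srt_text.lstrip("\ufeff").replace("\r\n", "\n").strip()
    for block in re.split(r"\n\s*\n", normalized):
        lines = [line.strip() for line in block.splitlines() if line.strip()]
        for i, line in enumerate(lines):
            match = CUE_START.match(line)
            if match:
                h, m, s, ms = map(int, match.groups())
                yield h * 3600 + m * 60 + s + ms / 1000, " ".join(lines[i + 1:])
                break  # one timing line per cue


def transcript_sections(srt_text, chapters):
    """Group caption text by chapter; chapters are (start_s, heading) sorted by start."""
    starts = [start for start, _ in chapters]
    grouped = [[] for _ in chapters]
    for cue_start, text in parse_srt(srt_text):
        # Last chapter beginning at or before this cue (clamped to the first).
        idx = max(bisect.bisect_right(starts, cue_start) - 1, 0)
        grouped[idx].append(text)
    return [(heading, " ".join(texts))
            for (_, heading), texts in zip(chapters, grouped) if texts]


SAMPLE_SRT = """1
00:00:00,000 --> 00:00:03,000
Video is now a search asset.

2
00:00:03,000 --> 00:00:06,500
Here is how to optimize it.

3
00:01:40,000 --> 00:01:44,000
Start with keyword research.
"""

chapters = [(0, "Why video search matters"), (95, "Keyword research")]
for heading, paragraph in transcript_sections(SAMPLE_SRT, chapters):
    print(heading)
    print(paragraph)
    print()
```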

When we implement this for clients at The 66th, we consistently see pages with video embeds, transcripts, and schema earn AI citations within weeks of publishing. The combination of visual content, structured data, and supporting text gives AI systems exactly the multi-modal signal they prioritize.

For a deeper look at how we reverse-engineer AI search to find these citation opportunities, our technical breakdown covers the process in detail.

Measuring Video Discovery Across Social and AI Channels

Optimization without measurement is guessing. The challenge with cross-platform video optimization is that each channel reports metrics differently, and AI citation tracking requires entirely new approaches.

Social Search Metrics That Matter

Stop tracking vanity metrics. For video discovery, these are the numbers that indicate whether your optimization is working:

| Metric | YouTube | TikTok | What It Tells You |
|---|---|---|---|
| Search impressions | YouTube Studio → Search traffic | TikTok Analytics → Traffic sources | How often your video appears in search results |
| Search CTR | YouTube Studio → Click-through rate | Not directly available | How effective your title/thumbnail is for searchers |
| Avg. completion rate | YouTube Studio → Audience retention | TikTok Analytics → Avg. watch time | Whether your content satisfies search intent |
| Search traffic % | YouTube Studio → Traffic sources | TikTok Analytics → Search vs. For You | What proportion of views come from search vs. algorithmic feed |
| Keyword rankings | Third-party tools (TubeBuddy, vidIQ) | Manual search checks | Where you rank for target terms |

The metric that matters most is search traffic percentage. If your optimization is working, the share of views coming from search (not just the algorithmic feed) should increase over time.
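Search traffic percentage is search views divided by total views for a period, tracked over time. A minimal sketch, assuming you export views by traffic source each month; the figures below are invented, the source labels mirror YouTube Studio's traffic source names, and you would substitute TikTok's equivalents for TikTok data:

```python
def search_share(views_by_source: dict[str, int], search_sources: set[str]) -> float:
    """Fraction of total views that came from the given search sources."""
    total = sum(views_by_source.values())
    if total == 0:
        return 0.0
    search_views = sum(v for source, v in views_by_source.items() if source in search_sources)
    return search_views / total


# Hypothetical monthly exports of views by traffic source.
monthly = {
    "2026-01": {"YouTube search": 1200, "Browse features": 3000, "Suggested videos": 1800},
    "2026-02": {"YouTube search": 1900, "Browse features": 2900, "Suggested videos": 1700},
}

for month, views in sorted(monthly.items()):
    print(f"{month}: {search_share(views, {'YouTube search'}):.1%} of views from search")
```

A flat share alongside rising total views means growth is coming from the feed rather than from search.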

Tracking AI Citations for Video Content

AI citation tracking is newer and less standardized, but it is essential. 93% of AI Mode searches end without a traditional click (Semrush, 2025). If you are only tracking website visits, you are missing the majority of your AI visibility.

Here is how to track AI citations for video content:

Manual prompt testing. Regularly ask ChatGPT, Perplexity, and Gemini questions related to your video topics. Note when and how your brand or content is referenced.

Google Search Console filtering. Use regex filters to identify AI-assisted search queries that reference your video content. Our guide on finding AI search queries in GSC walks through this process.

Brand monitoring. Set up alerts for your brand name appearing in AI-generated content. Tools like Brandwatch and Mention can catch these references.

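Referral traffic analysis. Track traffic from chatgpt.com (formerly chat.openai.com), perplexity.ai, and gemini.google.com in your analytics. These show direct visits from AI platforms. Clicks from Google AI Overviews generally arrive as ordinary Google organic traffic, so they do not appear as a separate referrer.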

Citation audits. Monthly, test 10-15 prompts related to your core topics across major AI platforms. Track changes in citation frequency over time.
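For the referral-traffic step, a small lookup table can bucket raw referrer URLs by AI platform. A minimal sketch: the hostname table is an assumption to check against your own analytics (ChatGPT now serves from chatgpt.com, and chat.openai.com is kept for older sessions), and subdomains such as www.perplexity.ai are matched by suffix:

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hostnames mapped to AI platforms (verify against your own data).
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url: str) -> str:
    """Return the AI platform for a referrer URL, or 'Other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Exact host or a true subdomain; "evilchatgpt.com" does not match.
        if host == domain or host.endswith("." + domain):
            return platform
    return "Other"


referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=video+seo",
    "https://www.google.com/",
]
print(Counter(classify_referrer(r) for r in referrers))
```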

When we track brand citations in ChatGPT for clients, we maintain a shared spreadsheet documenting which prompts generate citations, which do not, and how that changes month over month. This data feeds back into content optimization decisions.
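A spreadsheet like that can also be summarized programmatically. A minimal sketch, assuming each row records the month, platform, prompt, and whether your brand was cited; the helper names are hypothetical and the rows are placeholders:

```python
from collections import defaultdict


def citation_rates(rows):
    """Share of tested prompts that cited the brand, keyed by (month, platform)."""
    tested, cited = defaultdict(int), defaultdict(int)
    for month, platform, _prompt, was_cited in rows:
        tested[(month, platform)] += 1
        cited[(month, platform)] += int(was_cited)
    return {key: cited[key] / tested[key] for key in sorted(tested)}


def month_over_month(rates, platform):
    """Change in citation rate between consecutive audited months for one platform."""
    months = sorted(month for month, p in rates if p == platform)
    return {cur: rates[(cur, platform)] - rates[(prev, platform)]
            for prev, cur in zip(months, months[1:])}


# Placeholder audit rows: (month, platform, prompt, cited?).
rows = [("2026-01", "ChatGPT", f"prompt {i}", i < 1) for i in range(4)]
rows += [("2026-02", "ChatGPT", f"prompt {i}", i < 2) for i in range(4)]

rates = citation_rates(rows)
print(rates)
print(month_over_month(rates, "ChatGPT"))
```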

Key Takeaways

Video is a search asset, not just a content format. YouTube accounts for roughly 29.5% of AI Overview citations (Superprompt, 2025), and 41% of Gen Z search social platforms before Google (Sprout Social, 2025).

AI systems read your video through transcripts, metadata, and schema. Without these machine-readable layers, your video is largely invisible to AI discovery channels (Search Engine Land, 2025).

Multi-modal content wins. Pages with video, text, and structured data show 156% higher AI selection rates (AI Mode Boost, 2025).

One video should serve five channels. A unified distribution strategy (YouTube long-form, Shorts, TikTok, website embed, blog post) compounds discovery signals across every platform.

Measure what matters. Track search traffic percentage on social platforms and AI citation frequency separately from traditional analytics. 93% of AI Mode searches end without a traditional click, so citation visibility is the metric that counts (Semrush, 2025).

Frequently Asked Questions

Does video content really affect AI search citations?

Yes. YouTube alone accounts for roughly 29.5% of all Google AI Overview citations (Superprompt, 2025). Multi-modal content (pages combining text, video, and structured data) shows 156% higher selection rates for AI Overviews compared to text-only pages (AI Mode Boost, 2025).

Which platform should I optimize video for first?

Start with YouTube if your goal is AI discovery and long-term search visibility. YouTube feeds both Google search results and AI citation engines. Start with TikTok if your audience is under 35 and you need faster initial traction through social search.

How long does it take for optimized video to appear in AI results?

Most of our clients see initial AI citations within 4-8 weeks after implementing schema, transcripts, and proper metadata on their video content pages. Content freshness also matters: pages updated within the past 2 months earn 28% more AI citations (Superlines, 2026).

Do I need to embed videos on my website for AI discovery?

It is strongly recommended. AI crawlers primarily index the open web, not content locked inside platform apps. Embedding your video on your website with a published transcript, VideoObject schema, and contextual copy creates the machine-readable page that AI systems can cite.

What is VideoObject schema and why does it matter?

VideoObject is a type of structured data markup (schema.org) that tells search engines and AI systems exactly what a video contains, including its title, description, duration, thumbnail, and key moments. Pages with proper schema outperform by 30% in AI citation quality (Search Engine Land, 2025).

How do I know if my videos are being cited by AI systems?

Test manually by asking ChatGPT, Perplexity, and Gemini questions related to your video topics. Track referral traffic from AI platforms in your analytics. Use Google Search Console to filter for AI-assisted queries. Monthly citation audits across 10-15 prompts will show trends over time.

Is TikTok SEO different from YouTube SEO?

Yes. TikTok search reads three content layers (caption text, on-screen text, and spoken audio) and prioritizes completion rate and engagement velocity. YouTube search prioritizes metadata, watch time, and audience retention. The core principle of keyword relevance applies to both, but the implementation differs.
